In recent years, there has been a remarkable advancement in AI technology, with ChatGPT emerging as a prominent example. This language model developed by OpenAI has demonstrated an unprecedented ability to generate human-like text, engaging in conversations and providing coherent responses. However, as impressive as ChatGPT’s capabilities are, there is still room for improvement. Advanced Prompt Engineering has emerged as a driving force in refining ChatGPT’s performance, enabling it to evolve further and push the boundaries of AI language models. By carefully crafting prompts and inputs, researchers and engineers can enhance the quality and control of ChatGPT’s responses, making it a more reliable and versatile tool for various applications. This article explores the significance of ChatGPT and the crucial role that Advanced Prompt Engineering plays in unlocking its full potential, ultimately driving the evolution of AI.

OpenAI has been instrumental in developing revolutionary tools like the OpenAI Gym, designed for training reinforcement algorithms, and GPT-n models. The spotlight is also on DALL-E, an AI model that crafts images from textual inputs. One such model that has garnered considerable attention is OpenAI’s ChatGPT, a shining exemplar in the realm of Large Language Models.

You are viewing: ChatGPT & Advanced Prompt Engineering: Driving the AI Evolution

GPT-4: Prompt Engineering

ChatGPT has transformed the chatbot landscape, offering human-like responses to user inputs and expanding its applications across domains – from software development and testing to business communication, and even the creation of poetry.

In the hands of businesses and individuals, GPT-4, for instance, could serve as an inexhaustible knowledge reservoir, proficient in subjects ranging from Mathematics and Biology to Legal Studies. Such sophisticated and accessible AI models are poised to redefine the future of work, learning, and creativity.

Generative models like GPT-4 can produce new data based on existing inputs. This distinguishing attribute lets them undertake diverse tasks, including generating text, images, music, and video.

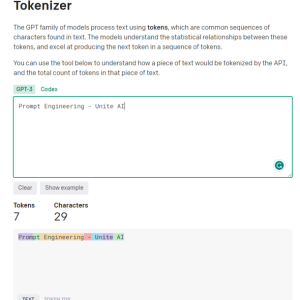

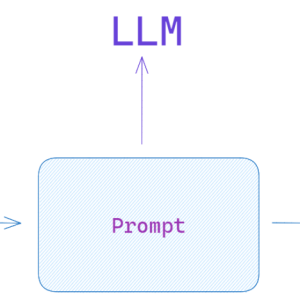

In the context of ChatGPT and OpenAI models, a prompt is the input that users provide to the models, usually in the form of text. Prompts serve as the primary means of controlling the output generated by these models, making them crucial for successful and relevant conversations.

The Impact of Prompt Quality

Using well-defined prompts is the key to engaging in useful and meaningful conversations with AI systems. Poorly-defined prompts can result in output that is not helpful to the user and may even lead to misleading content.

To demonstrate the impact of prompt quality, let’s compare two different prompts given to ChatGPT. The first prompt, poorly-defined and assuming prior knowledge, generates a potentially unhelpful response for the user. On the other hand, the second prompt provides more context and examples, ensuring a more relevant and understandable response.

Prompt 1: “Tell me about Convolutional Neural Networks.”

Response 1: “Convolutional Neural Networks (CNNs) are multi-layer perceptron networks that consist of fully connected layers and pooling layers. They are commonly used in image recognition tasks. The process involves applying filters to input images and downscaling them using pooling layers.”

Prompt 2: “I want to learn about Convolutional Neural Networks, but I have no prior knowledge of Artificial Neural Networks. Can you please provide a beginner-friendly explanation and an example of how CNNs are used in image recognition?”

Response 2: “Convolutional Neural Networks (CNNs) are a type of neural network primarily used for image recognition tasks. Unlike other neural networks, they leverage convolutional layers and pooling layers to process images. Imagine you have an image of a cat. CNNs can identify different features in the image, such as whiskers, ears, and tail, by applying filters and downsampling the image using pooling layers. This process makes CNNs highly effective for recognizing objects in images.”

By comparing the two responses, it is evident that a well-defined prompt leads to a more relevant and user-friendly response. Prompt design and engineering are growing disciplines that aim to optimize the output quality of AI models like ChatGPT.

In the following sections of this article, we will delve into the realm of advanced methodologies aimed at refining Large Language Models (LLMs), such as prompt engineering techniques and tactics. These include few-shot learning, ReAct, chain-of-thought, RAG, and more.

Advanced Engineering Techniques

Before we proceed, it’s important to understand a key issue with LLMs, referred to as ‘hallucination’. In the context of LLMs, ‘hallucination’ signifies the tendency of these models to generate outputs that might seem reasonable but are not rooted in factual reality or the given input context.

This problem was starkly highlighted in a recent court case where a defense attorney used ChatGPT for legal research. The AI tool, faltering due to its hallucination problem, cited non-existent legal cases. This misstep had significant repercussions, causing confusion and undermining credibility during the proceedings. This incident serves as a stark reminder of the urgent need to address the issue of ‘hallucination’ in AI systems.

Our exploration into prompt engineering techniques aims to improve these aspects of LLMs. By enhancing their efficiency and safety, we pave the way for innovative applications such as information extraction. Furthermore, it opens doors to seamlessly integrating LLMs with external tools and data sources, broadening the range of their potential uses.

Zero and Few-Shot Learning: Optimizing with Examples

Generative Pretrained Transformers (GPT-3) marked an important turning point in the development of Generative AI models, as it introduced the concept of ‘few-shot learning.’ This method was a game-changer due to its capability of operating effectively without the need for comprehensive fine-tuning. The GPT-3 framework is discussed in the paper, “Language Models are Few Shot Learners” where the authors demonstrate how the model excels across diverse use cases without necessitating custom datasets or code.

Unlike fine-tuning, which demands continuous effort to solve varying use cases, few-shot models demonstrate easier adaptability to a broader array of applications. While fine-tuning might provide robust solutions in some cases, it can be expensive at scale, making the use of few-shot models a more practical approach, especially when integrated with prompt engineering.

Imagine you’re trying to translate English to French. In few-shot learning, you would provide GPT-3 with a few translation examples like “sea otter -> loutre de mer”. GPT-3, being the advanced model it is, is then able to continue providing accurate translations. In zero-shot learning, you wouldn’t provide any examples, and GPT-3 would still be able to translate English to French effectively.

The term ‘few-shot learning’ comes from the idea that the model is given a limited number of examples to ‘learn’ from. It’s important to note that ‘learn’ in this context doesn’t involve updating the model’s parameters or weights, rather, it influences the model’s performance.

Few Shot Learning as Demonstrated in GPT-3 Paper

Zero-shot learning takes this concept a step further. In zero-shot learning, no examples of task completion are provided in the model. The model is expected to perform well based on its initial training, making this methodology ideal for open-domain question-answering scenarios such as ChatGPT.

In many instances, a model proficient in zero-shot learning can perform well when provided with few-shot or even single-shot examples. This ability to switch between zero, single, and few-shot learning scenarios underlines the adaptability of large models, enhancing their potential applications across different domains.

Zero-shot learning methods are becoming increasingly prevalent. These methods are characterized by their capability to recognize objects unseen during training. Here is a practical example of a Few-Shot Prompt:

See more : 10 Best ChatGPT Prompts for Developing Content Strategy

"Translate the following English phrases to French:

'sea otter' translates to 'loutre de mer'

'sky' translates to 'ciel'

'What does 'cloud' translate to in French?'"

By providing the model with a few examples and then posing a question, we can effectively guide the model to generate the desired output. In this instance, GPT-3 would likely correctly translate ‘cloud’ to ‘nuage’ in French.

We will delve deeper into the various nuances of prompt engineering and its essential role in optimizing model performance during inference. We’ll also look at how it can be effectively used to create cost-effective and scalable solutions across a broad array of use cases.

As we further explore the complexity of prompt engineering techniques in GPT models, it’s important to highlight our last post ‘Essential Guide to Prompt Engineering in ChatGPT‘. This guide provides insights into the strategies for instructing AI models effectively across a myriad of use cases.

In our previous discussions, we delved into fundamental prompt methods for large language models (LLMs) such as zero-shot and few-shot learning, as well as instruction prompting. Mastering these techniques is crucial for navigating the more complex challenges of prompt engineering that we’ll explore here.

Few-shot learning can be limited due to the restricted context window of most LLMs. Moreover, without the appropriate safeguards, LLMs can be misled into delivering potentially harmful output. Plus, many models struggle with reasoning tasks or following multi-step instructions.

Given these constraints, the challenge lies in leveraging LLMs to tackle complex tasks. An obvious solution might be to develop more advanced LLMs or refine existing ones, but that could entail substantial effort. So, the question arises: how can we optimize current models for improved problem-solving?

Equally fascinating is the exploration of how this technique interfaces with creative applications in Unite AI’s ‘Mastering AI Art: A Concise Guide to Midjourney and Prompt Engineering‘ which describes how the fusion of art and AI can result in awe-inspiring art.

Chain-of-thought Prompting

Chain-of-thought prompting leverages the inherent auto-regressive properties of large language models (LLMs), which excel at predicting the next word in a given sequence. By prompting a model to elucidate its thought process, it induces a more thorough, methodical generation of ideas, which tends to align closely with accurate information. This alignment stems from the model’s inclination to process and deliver information in a thoughtful and ordered manner, akin to a human expert walking a listener through a complex concept. A simple statement like “walk me through step by step how to…” is often enough to trigger this more verbose, detailed output.

Zero-shot Chain-of-thought Prompting

While conventional CoT prompting requires pre-training with demonstrations, an emerging area is zero-shot CoT prompting. This approach, introduced by Kojima et al. (2022), innovatively adds the phrase “Let’s think step by step” to the original prompt.

Let’s create an advanced prompt where ChatGPT is tasked with summarizing key takeaways from AI and NLP research papers.

In this demonstration, we will use the model’s ability to understand and summarize complex information from academic texts. Using the few-shot learning approach, let’s teach ChatGPT to summarize key findings from AI and NLP research papers:

1. Paper Title: "Attention Is All You Need"

Key Takeaway: Introduced the transformer model, emphasizing the importance of attention mechanisms over recurrent layers for sequence transduction tasks.

2. Paper Title: "BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding"

Key Takeaway: Introduced BERT, showcasing the efficacy of pre-training deep bidirectional models, thereby achieving state-of-the-art results on various NLP tasks.

Now, with the context of these examples, summarize the key findings from the following paper:

Paper Title: "Prompt Engineering in Large Language Models: An Examination"

This prompt not only maintains a clear chain of thought but also makes use of a few-shot learning approach to guide the model. It ties into our keywords by focusing on the AI and NLP domains, specifically tasking ChatGPT to perform a complex operation which is related to prompt engineering: summarizing research papers.

ReAct Prompt

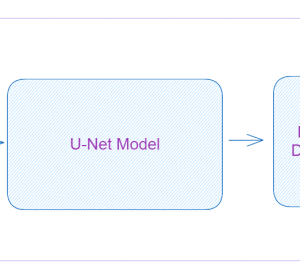

React, or “Reason and Act”, was introduced by Google in the paper “ReAct: Synergizing Reasoning and Acting in Language Models“, and revolutionized how language models interact with a task, prompting the model to dynamically generate both verbal reasoning traces and task-specific actions.

Imagine a human chef in the kitchen: they not only perform a series of actions (cutting vegetables, boiling water, stirring ingredients) but also engage in verbal reasoning or inner speech (“now that the vegetables are chopped, I should put the pot on the stove”). This ongoing mental dialogue helps in strategizing the process, adapting to sudden changes (“I’m out of olive oil, I’ll use butter instead”), and remembering the sequence of tasks. React mimics this human ability, enabling the model to quickly learn new tasks and make robust decisions, just like a human would under new or uncertain circumstances.

React can tackle hallucination, a common issue with Chain-of-Thought (CoT) systems. CoT, although an effective technique, lacks the capacity to interact with the external world, which could potentially lead to fact hallucination and error propagation. React, however, compensates for this by interfacing with external sources of information. This interaction allows the system to not only validate its reasoning but also update its knowledge based on the latest information from the external world.

The fundamental working of React can be explained through an instance from HotpotQA, a task requiring high-order reasoning. On receiving a question, the React model breaks down the question into manageable parts and creates a plan of action. The model generates a reasoning trace (thought) and identifies a relevant action. It may decide to look up information about the Apple Remote on an external source, like Wikipedia (action), and updates its understanding based on the obtained information (observation). Through multiple thought-action-observation steps, ReAct can retrieve information to support its reasoning while refining what it needs to retrieve next.

Note:

HotpotQA is a dataset, derived from Wikipedia, composed of 113k question-answer pairs designed to train AI systems in complex reasoning, as questions necessitate reasoning over multiple documents to answer. On the other hand, CommonsenseQA 2.0, constructed through gamification, includes 14,343 yes/no questions and is designed to challenge AI’s understanding of common sense, as the questions are intentionally crafted to mislead AI models.

The process could look something like this:

- Thought: “I need to search for the Apple Remote and its compatible devices.”

- Action: Searches “Apple Remote compatible devices” on an external source.

- Observation: Obtains a list of devices compatible with the Apple Remote from the search results.

- Thought: “Based on the search results, several devices, apart from the Apple Remote, can control the program it was originally designed to interact with.”

The result is a dynamic, reasoning-based process that can evolve based on the information it interacts with, leading to more accurate and reliable responses.

Comparative visualization of four prompting methods – Standard, Chain-of-Thought, Act-Only, and ReAct, in solving HotpotQA and AlfWorld (https://arxiv.org/pdf/2210.03629.pdf)

Designing React agents is a specialized task, given its ability to achieve intricate objectives. For instance, a conversational agent, built on the base React model, incorporates conversational memory to provide richer interactions. However, the complexity of this task is streamlined by tools such as Langchain, which has become the standard for designing these agents.

Context-faithful Prompting

See more : Analogical & Step-Back Prompting: A Dive into Recent Advancements by Google DeepMind

The paper ‘Context-faithful Prompting for Large Language Models‘ underscores that while LLMs have shown substantial success in knowledge-driven NLP tasks, their excessive reliance on parametric knowledge can lead them astray in context-sensitive tasks. For example, when a language model is trained on outdated facts, it can produce incorrect answers if it overlooks contextual clues.

This problem is apparent in instances of knowledge conflict, where the context contains facts differing from the LLM’s pre-existing knowledge. Consider an instance where a Large Language Model (LLM), primed with data before the 2022 World Cup, is given a context indicating that France won the tournament. However, the LLM, relying on its pretrained knowledge, continues to assert that the previous winner, i.e., the team that won in the 2018 World Cup, is still the reigning champion. This demonstrates a classic case of ‘knowledge conflict’.

In essence, knowledge conflict in an LLM arises when new information provided in the context contradicts the pre-existing knowledge the model has been trained on. The model’s tendency to lean on its prior training rather than the newly provided context can result in incorrect outputs. On the other hand, hallucination in LLMs is the generation of responses that may seem plausible but are not rooted in the model’s training data or the provided context.

Another issue arises when the provided context doesn’t contain enough information to answer a question accurately, a situation known as prediction with abstention. For instance, if an LLM is asked about the founder of Microsoft based on a context that does not provide this information, it should ideally abstain from guessing.

More Knowledge Conflict and the Power of Abstention Examples

To improve the contextual faithfulness of LLMs in these scenarios, the researchers proposed a range of prompting strategies. These strategies aim to make the LLMs’ responses more attuned to the context rather than relying on their encoded knowledge.

One such strategy is to frame prompts as opinion-based questions, where the context is interpreted as a narrator’s statement, and the question pertains to this narrator’s opinion. This approach refocuses the LLM’s attention to the presented context rather than resorting to its pre-existing knowledge.

Adding counterfactual demonstrations to prompts has also been identified as an effective way to increase faithfulness in cases of knowledge conflict. These demonstrations present scenarios with false facts, which guide the model to pay closer attention to the context to provide accurate responses.

Instruction fine-tuning

Instruction fine-tuning is a supervised learning phase that capitalizes on providing the model with specific instructions, for instance, “Explain the distinction between a sunrise and a sunset.” The instruction is paired with an appropriate answer, something along the lines of, “A sunrise refers to the moment the sun appears over the horizon in the morning, while a sunset marks the point when the sun disappears below the horizon in the evening.” Through this method, the model essentially learns how to adhere to and execute instructions.

This approach significantly influences the process of prompting LLMs, leading to a radical shift in the prompting style. An instruction fine-tuned LLM permits immediate execution of zero-shot tasks, providing seamless task performance. If the LLM is yet to be fine-tuned, a few-shot learning approach may be required, incorporating some examples into your prompt to guide the model toward the desired response.

“Instruction Tuning with GPT-4′ discusses the attempt to use GPT-4 to generate instruction-following data for fine-tuning LLMs. They used a rich dataset, comprising 52,000 unique instruction-following entries in both English and Chinese.

The dataset plays a pivotal role in instruction tuning LLaMA models, an open-source series of LLMs, resulting in enhanced zero-shot performance on new tasks. Noteworthy projects such as Stanford Alpaca have effectively employed Self-Instruct tuning, an efficient method of aligning LLMs with human intent, leveraging data generated by advanced instruction-tuned teacher models.

The primary aim of instruction tuning research is to boost the zero and few-shot generalization abilities of LLMs. Further data and model scaling can provide valuable insights. With the current GPT-4 data size at 52K and the base LLaMA model size at 7 billion parameters, there is enormous potential to collect more GPT-4 instruction-following data and combine it with other data sources leading to the training of larger LLaMA models for superior performance.

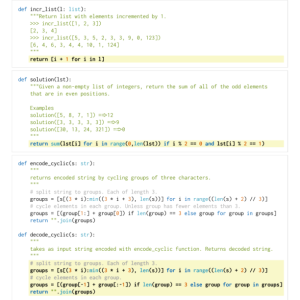

STaR: Bootstrapping Reasoning With Reasoning

The potential of LLMs is particularly visible in complex reasoning tasks such as mathematics or commonsense question-answering. However, the process of inducing a language model to generate rationales—a series of step-by-step justifications or “chain-of-thought”—has its set of challenges. It often requires the construction of large rationale datasets or a sacrifice in accuracy due to the reliance on only few-shot inference.

“Self-Taught Reasoner” (STaR) offers an innovative solution to these challenges. It utilizes a simple loop to continuously improve a model’s reasoning capability. This iterative process starts with generating rationales to answer multiple questions using a few rational examples. If the generated answers are incorrect, the model tries again to generate a rationale, this time giving the correct answer. The model is then fine-tuned on all the rationales that resulted in correct answers, and the process repeats.

STaR methodology, demonstrating its fine-tuning loop and a sample rationale generation on CommonsenseQA dataset (https://arxiv.org/pdf/2203.14465.pdf)

To illustrate this with a practical example, consider the question “What can be used to carry a small dog?” with answer choices ranging from a swimming pool to a basket. The STaR model generates a rationale, identifying that the answer must be something capable of carrying a small dog and landing on the conclusion that a basket, designed to hold things, is the correct answer.

STaR’s approach is unique in that it leverages the language model’s pre-existing reasoning ability. It employs a process of self-generation and refinement of rationales, iteratively bootstrapping the model’s reasoning capabilities. However, STaR’s loop has its limitations. The model may fail to solve new problems in the training set because it receives no direct training signal for problems it fails to solve. To address this issue, STaR introduces rationalization. For each problem the model fails to answer correctly, it generates a new rationale by providing the model with the correct answer, which enables the model to reason backward.

STaR, therefore, stands as a scalable bootstrapping method that allows models to learn to generate their own rationales while also learning to solve increasingly difficult problems. The application of STaR has shown promising results in tasks involving arithmetic, math word problems, and commonsense reasoning. On CommonsenseQA, STaR improved over both a few-shot baseline and a baseline fine-tuned to directly predict answers and performed comparably to a model that is 30× larger.

Tagged Context Prompts

The concept of ‘Tagged Context Prompts‘ revolves around providing the AI model with an additional layer of context by tagging certain information within the input. These tags essentially act as signposts for the AI, guiding it on how to interpret the context accurately and generate a response that is both relevant and factual.

Imagine you are having a conversation with a friend about a certain topic, let’s say ‘chess’. You make a statement and then tag it with a reference, such as ‘(source: Wikipedia)’. Now, your friend, who in this case is the AI model, knows exactly where your information is coming from. This approach aims to make the AI’s responses more reliable by reducing the risk of hallucinations, or the generation of false facts.

A unique aspect of tagged context prompts is their potential to improve the ‘contextual intelligence’ of AI models. For instance, the paper demonstrates this using a diverse set of questions extracted from multiple sources, like summarized Wikipedia articles on various subjects and sections from a recently published book. The questions are tagged, providing the AI model with additional context about the source of the information.

This extra layer of context can prove incredibly beneficial when it comes to generating responses that are not only accurate but also adhere to the context provided, making the AI’s output more reliable and trustworthy.

Conclusion: A Look into Promising Techniques and Future Directions

OpenAI’s ChatGPT showcases the uncharted potential of Large Language Models (LLMs) in tackling complex tasks with remarkable efficiency. Advanced techniques such as few-shot learning, ReAct prompting, chain-of-thought, and STaR, allow us to harness this potential across a plethora of applications. As we dig deeper into the nuances of these methodologies, we discover how they’re shaping the landscape of AI, offering richer and safer interactions between humans and machines.

Despite the challenges such as knowledge conflict, over-reliance on parametric knowledge, and potential for hallucination, these AI models, with the right prompt engineering, have proven to be transformative tools. Instruction fine-tuning, context-faithful prompting, and integration with external data sources further amplify their capability to reason, learn, and adapt.

That concludes the article: ChatGPT & Advanced Prompt Engineering: Driving the AI Evolution

I hope this article has provided you with valuable knowledge. If you find it useful, feel free to leave a comment and recommend our website!

Click here to read other interesting articles: AI

Source: duanetoops.com

#ChatGPT #Advanced #Prompt #Engineering #Driving #Evolution

Source: https://duanetoops.com

Category: Prompt Engineering